Talk to a knowledge graph – sparql-tool

Problems with using an LLM: hallucinations (sometimes good for creativity), outdated knowledge, no access to your data (trained on public knowledge).

Solutions:

Fine tuning – retrain the model on domain data

RAG – retrieval-augmented generation: fetch relevant context at query time and give it to the model

Search: look things up in real time. Claude Code reads the documents it finds.

Vector embeddings: semantic similarity search over documents.
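A minimal sketch of semantic similarity search, assuming cosine similarity as the measure. The 3-dimensional vectors and document names are made up for illustration; real embeddings come from an embedding model and have hundreds of dimensions.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical toy "embeddings", not produced by any real model
docs = {
    "protein folding": [0.9, 0.1, 0.0],
    "enzyme structure": [0.8, 0.3, 0.1],
    "pokemon types": [0.0, 0.2, 0.9],
}
query = [0.85, 0.2, 0.05]

def rank(query, docs):
    """Return document names, most similar to the query first."""
    return sorted(
        docs,
        key=lambda name: cosine_similarity(query, docs[name]),
        reverse=True,
    )

ranked = rank(query, docs)
print(ranked)
```

The unrelated document ends up last because its vector points in a different direction from the query.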

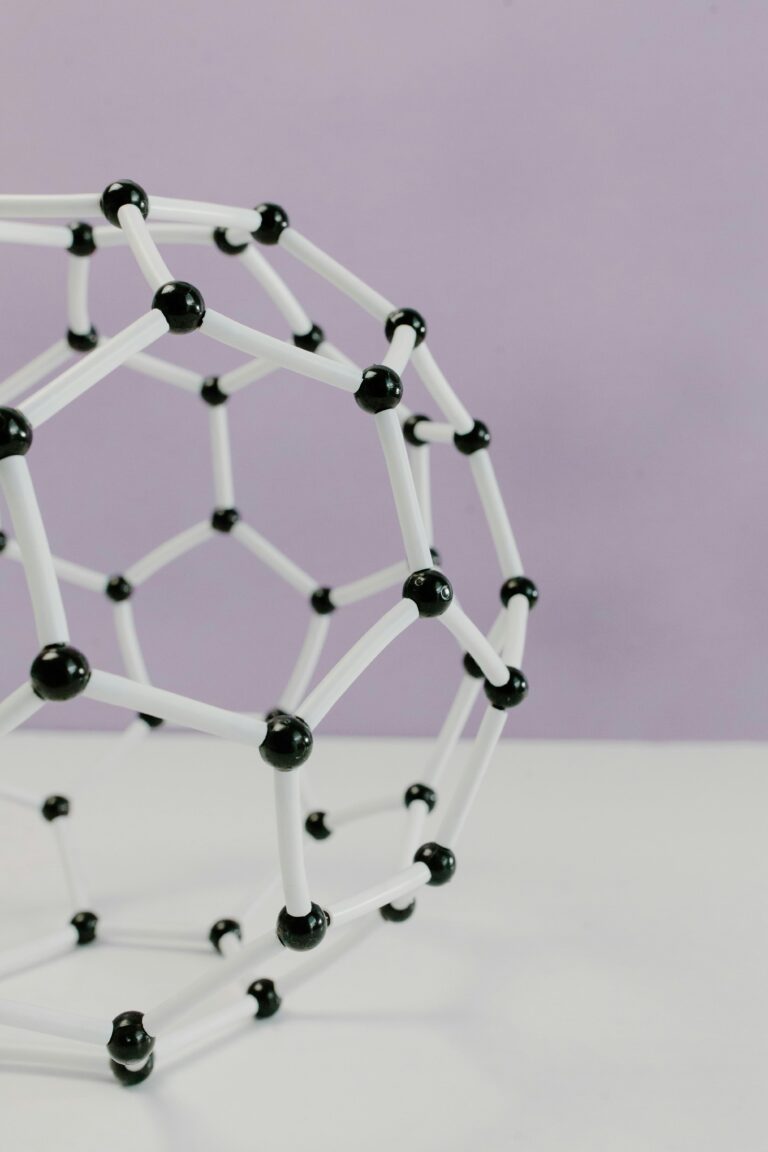

Knowledge graphs: structured, queryable, machine-readable knowledge

Two main flavors:

Property graphs: nodes and edges carry key-value properties – Neo4j, TigerGraph

RDF graphs: everything is a triple – subject, predicate, object

Engines

Datasets:

Everything is a URI
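A small sketch of the triple model in plain Python, without any RDF library. The `http://example.org/` namespace and the names under it are hypothetical. Note that in RDF the object may also be a literal value (such as a date) rather than a URI.

```python
# Hypothetical namespace, used only for this illustration
EX = "http://example.org/"

# Each fact is one (subject, predicate, object) triple
triples = {
    (EX + "alice", EX + "knows", EX + "bob"),
    (EX + "alice", EX + "birthDate", "1990-04-12"),  # literal object
    (EX + "bob", EX + "knows", EX + "carol"),
}

def match(triples, s=None, p=None, o=None):
    """Return triples matching the pattern; None acts as a wildcard."""
    return sorted(
        t for t in triples
        if (s is None or t[0] == s)
        and (p is None or t[1] == p)
        and (o is None or t[2] == o)
    )

# Who knows whom?
for subject, _, obj in match(triples, p=EX + "knows"):
    print(subject, "knows", obj)
```

Matching a pattern with wildcards against the triples is also the basic idea behind a SPARQL query.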

So, for example, for age: do not store the age itself; store the date of birth and compute the age from it.
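A sketch of that idea: the stored fact is the date of birth, and the age is derived when needed. The function name `age_on` and the example dates are illustrative.

```python
from datetime import date

def age_on(birth_date, today):
    """Age in whole years on the given day."""
    years = today.year - birth_date.year
    # Subtract one if the birthday has not yet occurred this year
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years

birth = date(1990, 4, 12)
print(age_on(birth, date(2024, 4, 11)))  # day before the birthday: 33
print(age_on(birth, date(2024, 4, 12)))  # on the birthday: 34
```

The stored date never goes stale, whereas a stored age would be wrong after the next birthday.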

The RDF ecosystem:

RDF

OWL (ontology language)

Triple stores – databases for RDF

Ontologies (formal schemas)

SPARQL – query language for RDF
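To make the pieces concrete, here is a sketch of a SPARQL query and of how a request to a triple store's endpoint could be built with the Python standard library. The choice of the public DBpedia endpoint, the resource `dbr:Kevin_Bacon`, and the property `dbo:birthDate` are assumptions for illustration, not something taken from the talk. The request is only built, not sent.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Assumed endpoint for the example (DBpedia's public SPARQL service)
ENDPOINT = "https://dbpedia.org/sparql"

# A triple pattern with one variable: subject and predicate are fixed,
# the object (?birthDate) is what we ask for.
QUERY = """
PREFIX dbo: <http://dbpedia.org/ontology/>
PREFIX dbr: <http://dbpedia.org/resource/>

SELECT ?birthDate WHERE {
  dbr:Kevin_Bacon dbo:birthDate ?birthDate .
}
"""

def build_request(endpoint, query):
    """Build (but do not send) an HTTP GET request carrying the query."""
    url = endpoint + "?" + urlencode({"query": query})
    return Request(url, headers={"Accept": "application/sparql-results+json"})

request = build_request(ENDPOINT, QUERY)
print(request.full_url)
# To run it: pass `request` to urllib.request.urlopen and parse the JSON body.
```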

Problems with RDF

Low traction outside academia

Steep learning curve

SPARQL is tedious to write by hand

Ontologies are complex

LLMs have this formal knowledge baked in (Claude etc.)

They understand RDF, OWL, SPARQL, ontologies

How to use them effectively.

sparql-tool

Democratizes RDF

Three components: skill, agent, CLI tool

The CLI does not need an LLM

This is replacing MCP.

Web search sometimes does not work because of semantic loss in what was being searched for

By the way, people are complaining that users aren't going to websites any more; they are going to LLMs instead.

DBpedia KG – they extract from Wikipedia very often…

Trying the Kevin Bacon algorithm and showing the hops is one way to test things.
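A sketch of the hop-counting idea, assuming a breadth-first search over a co-star graph. The tiny graph and the actor names other than Kevin Bacon are hypothetical placeholders; a real test would pull the links from the knowledge graph.

```python
from collections import deque

def shortest_path(graph, start, goal):
    """Breadth-first search; returns the shortest path as a list, or None."""
    if start == goal:
        return [start]
    visited = {start}
    queue = deque([[start]])
    while queue:
        path = queue.popleft()
        for neighbor in graph.get(path[-1], ()):
            if neighbor in visited:
                continue
            if neighbor == goal:
                return path + [neighbor]
            visited.add(neighbor)
            queue.append(path + [neighbor])
    return None

# Hypothetical "appeared together" links
graph = {
    "Actor A": ["Actor B", "Actor D"],
    "Actor B": ["Actor A", "Actor C"],
    "Actor C": ["Actor B", "Kevin Bacon"],
    "Actor D": ["Actor A"],
    "Kevin Bacon": ["Actor C"],
}

path = shortest_path(graph, "Actor A", "Kevin Bacon")
hops = len(path) - 1
print(" -> ".join(path), f"({hops} hops)")
```

Showing the full path, not just the hop count, makes it easy to check each link against the source data.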

Biotech: UniProt

UniProt KG (they publish data about proteins)

What proteins are associated with Alzheimer's?

The better question, though, is which proteins interact with which other proteins. That takes long.

Another one that is used – IntAct…

You have to know where the dataset came from.

Pokémon knowledge graph.

Community graph – has 100k entries. Used it as is.