A traditional problem-solving approach runs as follows: given a set of features or variables, can we interpret them well enough to reach a conclusion? A treatment strategy is one example: it is built from a series of data points, and ingesting that data leads to a decision. An equally hard question works in the other direction. When many factors affect a decision, is there one factor, or a small handful, that plays the key role, such that changing it alone would change the decision?

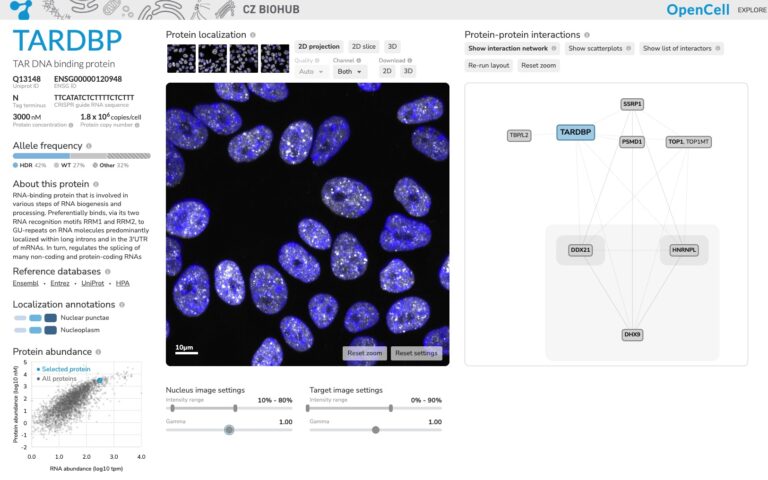

When an AI model ingests many variable factors and returns an answer, the reasoning behind that answer is usually opaque. Finding the key factors is therefore a central goal of xAI (explainable AI); the task is also called feature attribution. One widely used method for it is SHAP (SHapley Additive exPlanations). A good book chapter on the topic is available here: https://christophm.github.io/interpretable-ml-book/shap.html
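To make feature attribution concrete, here is a minimal sketch of the idea SHAP builds on: the Shapley value of a feature is its average marginal contribution to the prediction, taken over all subsets of the other features. Everything in the example is an assumption for illustration: the toy `model`, the feature names `age`, `dose`, and `marker`, and the choice to represent a "missing" feature by a fixed baseline value. It computes exact Shapley values by brute force, which is only feasible for a handful of features; it is not how the `shap` library works internally, since that library relies on approximations and model-specific shortcuts.

```python
from itertools import combinations
from math import factorial


def model(x):
    # Hypothetical toy scoring model (an assumption, not from any real study).
    # The age * marker term makes the two features interact.
    return 2.0 * x["age"] + 1.0 * x["dose"] + 3.0 * x["age"] * x["marker"]


def shapley_values(model, instance, baseline):
    """Exact Shapley values for one instance, relative to a baseline input."""
    features = list(instance)
    n = len(features)

    def value(subset):
        # Features in `subset` take the instance's values;
        # all others are held at their baseline values.
        x = {f: (instance[f] if f in subset else baseline[f]) for f in features}
        return model(x)

    phi = {}
    for f in features:
        others = [g for g in features if g != f]
        total = 0.0
        for k in range(n):  # k = size of the subset of other features
            # Shapley weight for a subset of size k out of n features.
            weight = factorial(k) * factorial(n - k - 1) / factorial(n)
            for s in combinations(others, k):
                s = set(s)
                # Marginal contribution of f when added to subset s.
                total += weight * (value(s | {f}) - value(s))
        phi[f] = total
    return phi


if __name__ == "__main__":
    instance = {"age": 1.0, "dose": 1.0, "marker": 1.0}
    baseline = {"age": 0.0, "dose": 0.0, "marker": 0.0}
    for name, contribution in shapley_values(model, instance, baseline).items():
        print(f"{name}: {contribution:.2f}")
```

The attributions always add up to the difference between the model's output for the instance and its output for the baseline, so each number can be read as that feature's share of the change in the decision. The feature with the largest share is the candidate "key factor" discussed above.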